When should you use a one-tailed test versus a two-tailed test?

Emma Jordan

Published Feb 15, 2026

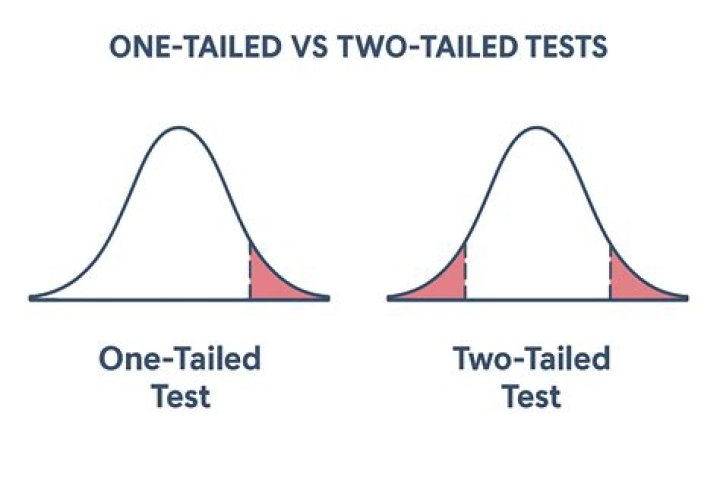

A two-tailed test uses both the positive and negative tails of the distribution. In other words, it tests for the possibility of a difference in either direction. A one-tailed test is appropriate only if you want to determine whether there is a difference between groups in one specific direction.

How do you determine if it’s a one-tailed or two-tailed test?

A one-tailed test has the entire 5% of the alpha level in one tail (either the left or the right tail). A two-tailed test splits your alpha level in half (as in the image to the left). Let’s say you’re working with the standard alpha level of 0.05 (5%). A two-tailed test will have half of this (2.5%) in each tail.
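As a rough illustration of how the alpha level is allocated, the sketch below computes the critical values for a test at alpha = 0.05. It assumes a standard normal test statistic (a z-test) and uses `statistics.NormalDist` from the Python standard library; the variable names are illustrative only.

```python
from statistics import NormalDist

alpha = 0.05
std_normal = NormalDist()  # mean 0, standard deviation 1

# One-tailed (right tail): all of alpha sits in a single tail.
one_tailed_crit = std_normal.inv_cdf(1 - alpha)

# Two-tailed: alpha is split in half, with alpha / 2 in each tail.
two_tailed_crit = std_normal.inv_cdf(1 - alpha / 2)

print(f"one-tailed critical z: {one_tailed_crit:.3f}")
print(f"two-tailed critical z: +/-{two_tailed_crit:.3f}")
```

The two-tailed cutoff is further from zero because each tail holds only 2.5% of the probability.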

When should a one-tailed test be used?

A one-tailed test is appropriate if you have considered the consequences of missing an effect in the untested direction and concluded that they are negligible and in no way irresponsible or unethical. For example, imagine that you have developed a new drug.

What is a one-tailed t-test used for?

When using a one-tailed test, the analyst is testing for the possibility of a relationship in one direction of interest and completely disregarding the possibility of a relationship in the other direction. For example, the analyst may be interested only in whether a portfolio’s return is greater than the market’s.

What is the disadvantage of one-tailed tests over two-tailed tests?

The disadvantage of one-tailed tests is that they have no statistical power to detect an effect in the other direction. As part of your pre-study planning process, determine whether you’ll use the one- or two-tailed version of a hypothesis test.
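The loss of power in the untested direction can be made concrete with a small calculation. This is a minimal sketch assuming a right-tailed one-sample z-test with known standard deviation; `power_right_tailed`, the effect size of 0.5 standard deviations, and the sample size of 30 are hypothetical choices for illustration.

```python
from math import sqrt
from statistics import NormalDist


def power_right_tailed(effect_size, n, alpha=0.05):
    """Power of a right-tailed one-sample z-test (hypothetical helper).

    effect_size is the true mean shift in standard-deviation units.
    """
    z = NormalDist()
    crit = z.inv_cdf(1 - alpha)
    # Under the alternative, the test statistic is N(effect_size * sqrt(n), 1).
    return 1 - z.cdf(crit - effect_size * sqrt(n))


power_expected = power_right_tailed(0.5, n=30)   # effect in the tested direction
power_opposite = power_right_tailed(-0.5, n=30)  # effect in the untested direction

print(f"power, tested direction:   {power_expected:.3f}")
print(f"power, untested direction: {power_opposite:.6f}")
```

Under these assumptions, the chance of rejecting the null hypothesis when the effect runs the other way falls below alpha itself, which is why such an effect goes undetected in practice.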

What are the arguments for not using one-tailed tests?

Suppose you use a one-tailed test to improve the test’s ability to detect whether a new vaccine is better. That can be unethical, because the test cannot determine whether the vaccine is less effective. You risk missing valuable information by testing in only one direction.

What is the difference between Type 1 and Type 2 error?

A Type I error, in statistical hypothesis testing, is the error of rejecting a null hypothesis when it is true. A Type II error is the error of failing to reject the null hypothesis when it is false.

What is the advantage of one-tailed tests over two-tailed tests?

The benefit of using a one-tailed test is that it requires fewer subjects to reach significance. A two-tailed test splits your significance level and applies it in both directions. Thus, each direction gets only half the significance level of a one-tailed test, which puts all of it in one direction.
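The difference in required subjects can be estimated with the usual normal-approximation sample-size formula, n = ((z_alpha + z_beta) / d)^2. The sketch below assumes a one-sample z-test with known standard deviation; `required_n`, the effect size of 0.5 standard deviations, and the 80% power target are hypothetical choices, and the two-tailed figure ignores the negligible chance of rejecting in the wrong tail.

```python
from math import ceil
from statistics import NormalDist


def required_n(effect_size, alpha=0.05, power=0.80, tails=2):
    """Approximate sample size for a one-sample z-test (hypothetical helper)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / tails)  # critical value for the chosen tails
    z_beta = z.inv_cdf(power)               # quantile matching the target power
    return ceil(((z_alpha + z_beta) / effect_size) ** 2)


n_one = required_n(0.5, tails=1)
n_two = required_n(0.5, tails=2)

print(f"one-tailed test: n = {n_one}")
print(f"two-tailed test: n = {n_two}")
```

The one-tailed design needs fewer subjects only because its critical value is smaller. The saving applies when the effect truly lies in the predicted direction.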

Is it easier to reject the null hypothesis with a one-tailed or two-tailed test?

It is easier to reject the null hypothesis with a one-tailed than with a two-tailed test as long as the effect is in the specified direction. Probability values for one-tailed tests are one half the value for two-tailed tests as long as the effect is in the specified direction.
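The halving of the probability value can be checked directly. This is a minimal sketch assuming a z-test; the observed statistic `z_stat = 1.80` is a hypothetical value chosen to lie in the predicted (positive) direction.

```python
from statistics import NormalDist

z_stat = 1.80  # hypothetical observed z statistic, in the predicted direction
std_normal = NormalDist()

# One-tailed: area in the right tail only.
p_one_tailed = 1 - std_normal.cdf(z_stat)

# Two-tailed: area in both tails beyond |z_stat|.
p_two_tailed = 2 * (1 - std_normal.cdf(abs(z_stat)))

print(f"one-tailed p = {p_one_tailed:.4f}")
print(f"two-tailed p = {p_two_tailed:.4f}")
```

With this statistic the one-tailed p-value falls below 0.05 while the two-tailed p-value does not, so only the one-tailed test rejects the null hypothesis.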

What is worse, a Type 1 or a Type 2 error?

Many textbooks and instructors will say that a Type 1 error (false positive) is worse than a Type 2 error (false negative). The rationale boils down to the idea that if you stick to the status quo or default assumption, at least you’re not making things worse. And in many cases, that’s true.

Which one is worse, a Type 1 or a Type 2 error?

The short answer to this question is that it really depends on the situation. In some cases, a Type I error is preferable to a Type II error, but in other applications, a Type I error is more dangerous to make than a Type II error.

Why is the t-value the same for a 90% two-tailed test and a 95% one-tailed test?

The short answer is: because they answer different questions, one being more concrete than the other. A 90% two-tailed test places 5% of the probability in each tail, and a 95% one-tailed test places its full 5% in a single tail, so both use the same cutoff. The one-tailed question limits the values we are interested in, so the same statistic now has a different inferential meaning, resulting in a lower error probability and hence a higher observed significance.
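One way to see why the two cutoffs coincide is to compute the tail area each test uses. The sketch below uses the standard normal distribution as a stand-in (an assumption made so the example runs with the standard library alone); the same tail-area argument applies to the t distribution at any fixed degrees of freedom.

```python
from statistics import NormalDist

std_normal = NormalDist()

# 90% two-tailed: alpha = 0.10, split as 0.05 in each tail.
crit_two_tailed_90 = std_normal.inv_cdf(1 - 0.10 / 2)

# 95% one-tailed: alpha = 0.05, all of it in one tail.
crit_one_tailed_95 = std_normal.inv_cdf(1 - 0.05)

print(f"90% two-tailed cutoff: {crit_two_tailed_90:.3f}")
print(f"95% one-tailed cutoff: {crit_one_tailed_95:.3f}")
```

Both tests leave 5% of the probability beyond the upper cutoff, so the critical value is identical even though the stated confidence levels differ.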

What is the relationship between Type 1 and Type 2 error?

A type I error (false-positive) occurs if an investigator rejects a null hypothesis that is actually true in the population; a type II error (false-negative) occurs if the investigator fails to reject a null hypothesis that is actually false in the population.

What is an example of a type 1 error?

Consider a criminal trial. The null hypothesis is that the person is innocent, while the alternative is that the person is guilty. A Type I error in this case would mean that the person is found guilty and sent to jail despite actually being innocent.

What is the difference between a Type 1 and Type 2 error?

In statistics, a Type I error means rejecting the null hypothesis when it’s actually true, while a Type II error means failing to reject the null hypothesis when it’s actually false.
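Both error rates can be estimated by simulation. This is a rough sketch, assuming a two-tailed one-sample z-test of H0: mean = 0 on normally distributed data with known standard deviation 1; `rejection_rate`, the sample size, the number of trials, and the true mean of 0.5 are all hypothetical choices for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def rejection_rate(true_mean, n=30, trials=2000, alpha=0.05, seed=0):
    """Share of simulated two-tailed z-tests that reject H0: mean = 0.

    Hypothetical helper; samples are drawn from N(true_mean, 1).
    """
    rng = random.Random(seed)
    crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(trials):
        sample = [rng.gauss(true_mean, 1) for _ in range(n)]
        z = (sum(sample) / n) * sqrt(n)  # sample mean divided by its standard error
        if abs(z) > crit:
            rejections += 1
    return rejections / trials


# H0 is true: every rejection is a Type I error.
type_1_rate = rejection_rate(true_mean=0.0)

# H0 is false: every failure to reject is a Type II error.
type_2_rate = 1 - rejection_rate(true_mean=0.5)

print(f"estimated Type I error rate:  {type_1_rate:.3f}")
print(f"estimated Type II error rate: {type_2_rate:.3f}")
```

The estimated Type I rate should land close to the chosen alpha, while the Type II rate depends on the true effect size and the sample size.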

Is a false positive a Type 1 error?

Yes. The null hypothesis is a general statement or default position that there is no relationship between two measured phenomena. Simply put, Type 1 errors are “false positives”: they happen when the tester validates a statistically significant difference even though there isn’t one.