What is a decision tree? Explain with an example.

Andrew Mclaughlin

Published Feb 21, 2026

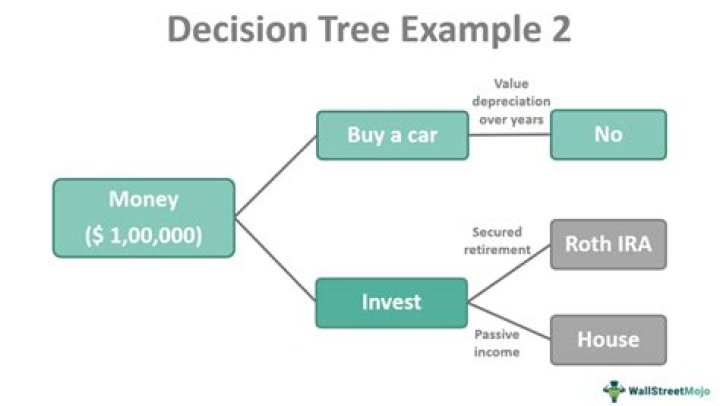

A decision tree is a very specific type of probability tree that enables you to make a decision about some kind of process. For example, you might want to choose between manufacturing item A or item B, or investing in choice 1, choice 2, or choice 3.

How do you write a decision tree example?

How do you create a decision tree?

- Start with your overarching objective (the “big decision”) at the top, as the root.

- Draw your arrows (the branches), one for each option or possible outcome.

- Attach leaf nodes at the end of your branches.

- Determine the odds of success of each decision point.

- Evaluate risk vs reward.
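The last two steps can be sketched in a few lines of Python. This is only an illustration, assuming each option has a short list of outcomes with estimated odds and payoffs. The `Outcome` class, the `expected_value` helper, and all the figures are hypothetical and not taken from any real project.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    probability: float  # estimated odds of this outcome
    payoff: float       # hypothetical gain (positive) or loss (negative)


def expected_value(outcomes):
    """Probability-weighted average payoff of one option."""
    total_p = sum(o.probability for o in outcomes)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("probabilities for an option must sum to 1")
    return sum(o.probability * o.payoff for o in outcomes)


# Hypothetical figures, for illustration only.
options = {
    "item A": [Outcome(0.6, 100_000), Outcome(0.4, -40_000)],
    "item B": [Outcome(0.9, 30_000), Outcome(0.1, -5_000)],
}

values = {name: expected_value(outs) for name, outs in options.items()}
best = max(values, key=values.get)
print(values, best)
```

Expected value is only one way to weigh risk against reward. An option with a higher expected value can still carry a larger possible loss, as item A does here.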

What are decision trees? Explain with the help of an example.

A decision tree is a flowchart-like structure in which each internal node represents a “test” on an attribute (e.g. whether a coin flip comes up heads or tails), each branch represents the outcome of the test, and each leaf node represents a class label (decision taken after computing all attributes).
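One possible way to picture this structure in code is shown below. It is a minimal sketch, not a standard library API: the `Node` and `Leaf` classes, the `classify` function, and the coin-and-rain example are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Union


@dataclass
class Leaf:
    label: str  # class label returned when this leaf is reached


@dataclass
class Node:
    test: Callable[[dict], str]               # test on an attribute; returns an outcome
    branches: Dict[str, Union["Node", Leaf]]  # one subtree per outcome


def classify(tree, sample):
    """Walk from the root to a leaf, following the branch for each test outcome."""
    while isinstance(tree, Node):
        tree = tree.branches[tree.test(sample)]
    return tree.label


# Hypothetical tree: go out only if the coin shows heads and it is not raining.
tree = Node(
    test=lambda s: s["coin"],
    branches={
        "tails": Leaf("stay in"),
        "heads": Node(
            test=lambda s: "yes" if s["raining"] else "no",
            branches={"yes": Leaf("stay in"), "no": Leaf("go out")},
        ),
    },
)

print(classify(tree, {"coin": "heads", "raining": False}))
```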

What is a decision tree in machine learning, with an example?

A decision tree is a flowchart-like structure in which each internal node represents a test on a feature (e.g. whether a coin flip comes up heads or tails), each leaf node represents a class label (decision taken after computing all features) and branches represent conjunctions of features that lead to those class …

What is a simple decision tree?

A simple example: decision trees are made up of decision nodes and leaf nodes. In the decision tree below we start with the top-most box, which represents the root of the tree (a decision node). After splitting the data on whether the width (X1) is less than 5.3, we get two leaf nodes with 5 items in each.
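A single split like this can be sketched in Python. The ten width values below are hypothetical, chosen only so that the 5.3 threshold sends 5 items to each leaf; they are not the data behind the original example.

```python
# Hypothetical widths (X1) for 10 items.
widths = [4.1, 4.6, 4.9, 5.0, 5.2, 5.4, 5.8, 6.1, 6.5, 7.0]
THRESHOLD = 5.3

# The root decision node asks one question: is the width less than 5.3?
left = [w for w in widths if w < THRESHOLD]    # leaf for "yes"
right = [w for w in widths if w >= THRESHOLD]  # leaf for "no"

print(len(left), len(right))
```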

Which is the best decision tree algorithm?

The ID3 algorithm builds decision trees using a top-down greedy search approach through the space of possible branches with no backtracking. A greedy algorithm, as the name suggests, always makes the choice that seems to be the best at that moment.
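To make the greedy, no-backtracking idea concrete, here is a small sketch in the spirit of ID3. It assumes categorical attributes and uses information gain to rank them. The function names and the tiny weather dataset are invented for illustration, and details such as tie-breaking and empty branches are left out.

```python
from collections import Counter
from math import log2


def entropy(labels):
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(rows, attr, target):
    base = entropy([r[target] for r in rows])
    remainder = 0.0
    for value in {r[attr] for r in rows}:
        subset = [r[target] for r in rows if r[attr] == value]
        remainder += len(subset) / len(rows) * entropy(subset)
    return base - remainder


def id3(rows, attrs, target):
    labels = [r[target] for r in rows]
    if len(set(labels)) == 1 or not attrs:
        return Counter(labels).most_common(1)[0][0]  # leaf: majority label
    # Greedy step: commit to the locally best attribute and never revisit it.
    best = max(attrs, key=lambda a: information_gain(rows, a, target))
    rest = [a for a in attrs if a != best]
    return {best: {v: id3([r for r in rows if r[best] == v], rest, target)
                   for v in {r[best] for r in rows}}}


# Hypothetical training data.
rows = [
    {"outlook": "sunny", "windy": "no", "play": "yes"},
    {"outlook": "sunny", "windy": "yes", "play": "yes"},
    {"outlook": "rainy", "windy": "no", "play": "yes"},
    {"outlook": "rainy", "windy": "yes", "play": "no"},
    {"outlook": "rainy", "windy": "yes", "play": "no"},
    {"outlook": "sunny", "windy": "yes", "play": "yes"},
]
tree = id3(rows, ["outlook", "windy"], "play")
print(tree)
```

On this data the sketch splits on `outlook` first, because that attribute has the higher information gain, and then splits the rainy branch on `windy`.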

What are the two classifications of trees?

Broadly, trees are grouped into two primary categories: deciduous and coniferous.

What is entropy in decision tree?

A decision tree is built top-down from a root node and involves partitioning the data into subsets that contain instances with similar values (homogeneous). The ID3 algorithm uses entropy to calculate the homogeneity of a sample.
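For reference, the entropy meant here is usually the Shannon entropy. The symbols are notation introduced for this note: $S$ is the sample, $c$ is the number of classes, and $p_i$ is the fraction of items in $S$ that belong to class $i$.

```latex
H(S) = -\sum_{i=1}^{c} p_i \log_2 p_i
```

Terms with $p_i = 0$ are taken to contribute zero.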

How do you analyze a decision tree?

How to Use a Decision Tree in Project Management

- Identify Each of Your Options. The first step is to identify each of the options before you.

- Forecast Potential Outcomes for Each Option.

- Thoroughly Analyze Each Potential Result.

- Optimize Your Actions Accordingly.

Which algorithm is used for decision tree?

One widely used algorithm for building a tree is CART, which stands for Classification and Regression Trees. A decision tree asks a question and, based on the answer (yes/no), splits further into subtrees.
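A rough sketch of how one such yes/no question could be chosen is shown below. It assumes a single numeric feature and scores candidate thresholds with Gini impurity, the measure commonly associated with CART for classification. The `gini` and `best_split` helpers and the data are hypothetical, and a real implementation would handle many features and recurse on each side.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0 for a pure node, 0.5 for an even two-class mix."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(values, labels):
    """Try each midpoint between sorted values as the question 'value < t?'."""
    pairs = sorted(zip(values, labels))
    best_t, best_score = None, float("inf")
    for (a, _), (b, _) in zip(pairs, pairs[1:]):
        if a == b:
            continue  # no threshold fits between equal values
        t = (a + b) / 2
        yes = [lab for v, lab in pairs if v < t]
        no = [lab for v, lab in pairs if v >= t]
        # Size-weighted impurity of the two subtrees.
        score = (len(yes) * gini(yes) + len(no) * gini(no)) / len(pairs)
        if score < best_score:
            best_t, best_score = t, score
    return best_t, best_score


# Hypothetical data.
values = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
labels = ["a", "a", "a", "b", "b", "b"]
threshold, impurity = best_split(values, labels)
print(threshold, impurity)
```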

What are the 3 main types of trees?

Types of Trees

- Deciduous Trees.

- Broad-leaf Trees. The leaves of these trees are broad, so they are sometimes called broad-leaf trees.

- Some of the Well Known Deciduous Trees.

- Oak Trees (Deciduous)

- Ash Trees (Deciduous)

- Big Trees.

- Willow Trees (Deciduous)

- Shade Trees (Deciduous)

How is entropy used in decision trees?

The ID3 algorithm uses entropy to calculate the homogeneity of a sample. If the sample is completely homogeneous, the entropy is zero; if the sample is equally divided between two classes, the entropy is one. The information gain is based on the decrease in entropy after a dataset is split on an attribute.
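These two cases, and the idea of information gain, can be checked with a short Python sketch. The function names and label lists are made up for illustration, and base-2 logarithms are assumed.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    n = len(labels)
    return sum(-(c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(parent, subsets):
    """Entropy of the parent minus the size-weighted entropy of the subsets."""
    weighted = sum(len(s) / len(parent) * entropy(s) for s in subsets)
    return entropy(parent) - weighted


pure = ["yes"] * 4                  # completely homogeneous sample
mixed = ["yes", "yes", "no", "no"]  # equally divided between two classes

print(entropy(pure))
print(entropy(mixed))
# A split that separates the classes perfectly removes all the entropy.
print(information_gain(mixed, [["yes", "yes"], ["no", "no"]]))
```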